One of the most vexing problems in business is scaling: how to keep a company fast and efficient as it grows bigger. You sometimes hear people say “We need to work more like a start-up!” No big company has ever been able to accomplish that feat. But why is that? What are the mechanisms making bigger companies more complicated to run than smaller ones? This essay unpacks those fundamental dynamics. They are somewhat abstract, but there are only two of them, and they help detect and diagnose scaling issues in our teams and workflows.

“We need to be more like a start-up”

Work in a big company often feels slow and bureaucratic. Even a seemingly simple task, like making a small purchase, easily mushrooms into a struggle with all sorts of people, processes, tools and rules. When those tasks are not core to the work we do, merely something unimportant we need squared away, you hear people say: “We need to be more like a start-up!” Not that they know what it’s actually like to work in a start-up. What they really mean to say is: “Why can’t we just do this thing and be done with it?”

The frustration is real and the sentiment is understandable. Nevertheless it is not desirable for a big company to operate like a small one. It’s also literally not possible. Running a big company like a small one is not something we could do if we just tried hard enough. It has nothing to do with managerial skill or organizational willpower. Companies are complex systems. Bigger complex systems display fundamentally different behaviors than similar but smaller systems.

This essay shows what causes those behaviors to emerge, and how you can recognize them and mitigate their negative impact. As is often the case with a writing project, it started as an attempt to investigate a question and ended up answering a slightly different one. Originally, I wanted to know if it really is not possible to operate at Big in the exact same way as Small – just more bigly. I got my answer (it isn’t) but as a far more interesting bonus I also figured out the two why’s.

Viewed as a system, a company is a collection of productive resources, organized with the goal of producing valuable output. In that sense a bigger company is no different than a smaller one, except that it consists of a larger and more diverse collection of resources. In a knowledge work company, those productive resources are people: the company’s employees. There are other resources, such as tools, computer systems, documents and other artefacts… But for the scaling problem we’re interested in, it’s the people that matter. The starting question is: “What, if anything, is different about a large group of people working together compared to a small group?”

If you look for scaling research, you soon encounter literature on computer systems, which are a good analogy for knowledge work companies. A computer system also consists of a bunch of information-processing resources: CPUs. And every CPU essentially does the same as a knowledge worker: receive information inputs, apply some form of logic to that information, and produce information outputs. It’s interesting to study what happens when you try to scale computer systems, i.e. combine more and more CPUs to process information into productive output.

The analogy is, of course, an imperfect abstraction. Human beings differ from computer chips in many ways. Every individual in a company has a unique skill base, while computer systems can consist of thousands of completely identical work units. Unlike CPUs, people are subject to something called motivation and don’t necessarily always work at their full productive capacity. (We’re not talking about idle time here, when there’s nothing to do for CPU or knowledge worker, or about downtime, when CPU or knowledge worker is defective or sick.) For this discussion, however, we don’t focus on deficiencies and remedies at the level of the individual carbon or silicon work unit. Fully recognizing that human beings are different from CPUs, it is the work unit aggregation aspect we are interested in. What can we learn from scaling effects in information-processing computer systems that teaches us something about scaling effects in information-processing companies?

“

Like performance optimization, scalability optimization can be a real mystery unless you have an accurate model of how the world works.

“

–Baron Schwartz (Practical Scalability Analysis With The Universal Scalability Law)

Introducing the Universal Scalability Law

Looking for literature on laws describing computer scalability, I bumped into a text covering a broader scope. There’s actually something called the Universal Scalability Law (USL), and it’s exactly what we need. When we scale, we essentially add productive resources to the system to generate more productive output. If that output rose in proportion to the resource increase, we wouldn’t be talking about scaling problems. But you don’t always get twice the output if you double the resources. The USL is a model that describes two different forms of “scaling” and “scaling problems”. Here’s two examples to illustrate them both.

> The output we want from a computer system is calculations. The resource we add is more CPUs. As we increase the number of CPUs to process the same amount of calculation requests, how many more calculations are delivered per unit of time?

> The output we want is again calculations. But this time, we add more simultaneous calculation requests to the computer system, while keeping the number of CPUs constant. How does that affect the amount of calculations delivered per unit of time?

The first example is scalability analysis as a function of size: how does the output of a system change if we increase the productive resources in that system? The second example is scalability analysis as a function of system load, i.e. higher demand being placed on an unchanged system. If scaling worked perfectly, you’d get 2x the output if you 2x’ed the input, which is called linear scaling. A big company would then basically function just like a start-up, except larger. But as we all know, it often doesn’t work like that, and the USL describes how it does work. Here is the entire USL. To understand it, we’ll reconstruct it bit by bit.

$$X(N) = \frac{\lambda N}{1 + \alpha (N - 1) + \beta N (N - 1)}$$

To build up the USL, we start with the simplest possible computer system. It has only 1 CPU, and we use it to generate productive output (which I’m going to call “throughput” X to stay consistent with literature on this topic). The CPU we are considering can produce λ throughput. Expressed as a formula, that becomes:

$$X(1) = \lambda$$

If the system scales perfectly linearly, every additional CPU will also produce the same amount λ. So we can say:

$$X(2) = 2\lambda$$

$$X(3) = 3\lambda$$

…or in general, for a system with a size of N working units

$$X(N) = \lambda N$$

If we plot size N on the x-axis and throughput X on the y-axis, this is of course a straight line. The slope of that line is determined by λ, which we can think of as a productivity factor. Some CPUs are better than others and can perform more calculations per second. This higher productive capacity is expressed as a higher λ. Systems with this more productive CPU will have a steeper curve than lower λ systems. But in both cases the curve is a straight line, representing a system that scales linearly.

This is an example of size scaling: we add more CPUs to process the same workload. In our system, where does the benefit of scaling really come from? Our original 1 CPU delivered λ calculations per unit of time. When we add another CPU, each of the two delivers λ calculations per unit of time, so throughput doubles to 2λ. The underlying assumption is that the entire workload can be split up in equal parts, for simultaneous execution spread out across all available CPUs. Unfortunately, real world workloads can’t always be divided up that way. If a part of the work still relies on a shared resource that can’t be split up, that work item will need to wait for its turn on the shared resource. That translates to loss of throughput, and the system doesn’t scale linearly.

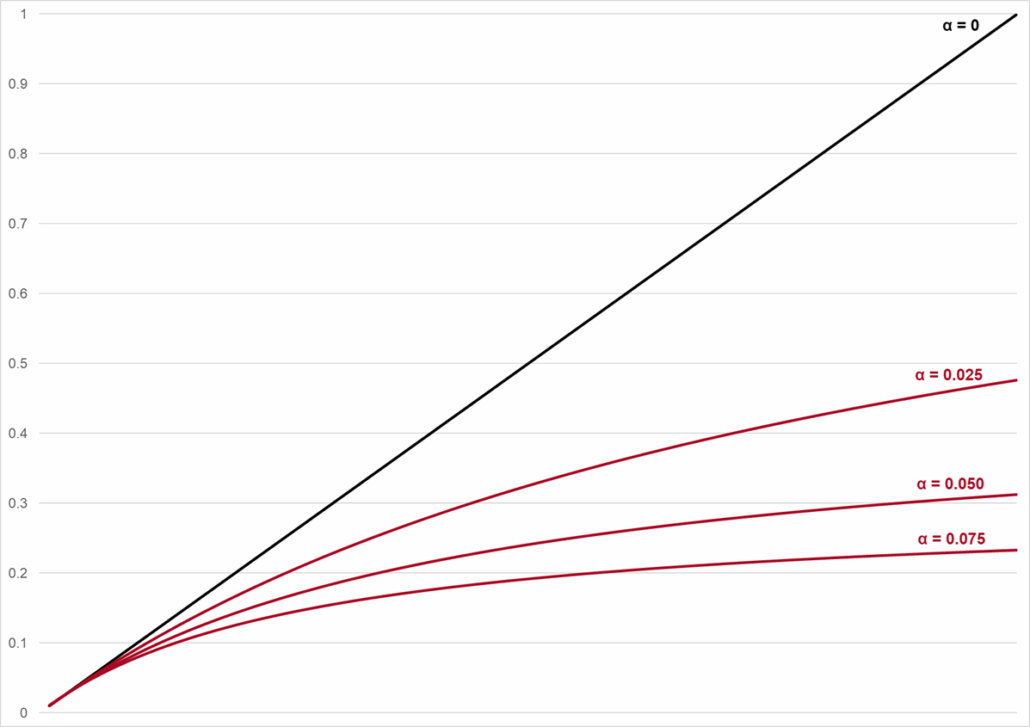

This effect is called contention and it can be modeled in the USL by introducing another variable α as follows.

$$X(N) = \frac{\lambda N}{1 + \alpha (N - 1)}$$

(In literature, this is known as Amdahl’s Law.) Visualized, scaling from N = 1 to N = 100, it looks like this.

The good news is that, by adding CPUs, our system generates more throughput. In this stylized example, for the α = 0.025 curve, 100x as many working units produce only about 29x as much throughput (100 / (1 + 0.025 × 99) ≈ 28.8). In absolute terms, we produce more. But relative to the number of CPUs, the average throughput per working unit drops to less than a third.

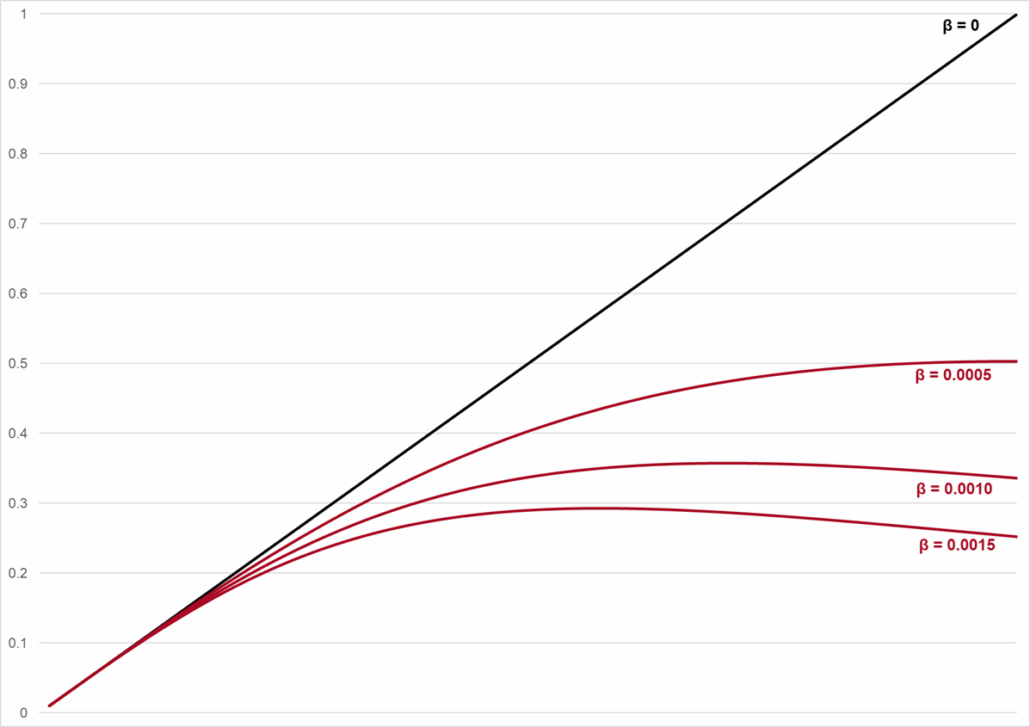
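The contention effect can be explored numerically with a few lines of Python. This is a minimal sketch, assuming the Amdahl-style form X(N) = λN / (1 + α(N − 1)). The function name and the α values are illustrative choices, not taken from the figure.

```python
def contention_throughput(n: int, lam: float = 1.0, alpha: float = 0.0) -> float:
    """Throughput of n working units when only contention applies.

    Assumed form: X(N) = lam * N / (1 + alpha * (N - 1)).
    lam is the throughput of a single unit, alpha the contention factor.
    """
    if n < 1:
        raise ValueError("n must be at least 1")
    return lam * n / (1.0 + alpha * (n - 1))


# Illustrative alpha values (assumptions, not read off the essay's graph).
for alpha in (0.0, 0.01, 0.05):
    for n in (1, 10, 100):
        x = contention_throughput(n, alpha=alpha)
        # x / n is the average throughput per working unit.
        print(f"alpha={alpha:<5} N={n:<4} X={x:7.2f}  per-unit={x / n:.2f}")
```

With α = 0 the output grows linearly. With any α > 0, throughput keeps rising but flattens toward a ceiling of λ/α, no matter how many units are added.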

Contention is not our only scaling problem. Suppose we had no contention, and the workload of our computer system could be perfectly divided up in parallel streams. Adding CPUs to such a system will introduce a new type of workload, which the same CPUs need to process: the coordination work of splitting up the production workload, keeping track of which CPU handles which part of it, and reassembling the results of all CPUs into the aggregated final result. If we add resources to a system, we can’t avoid adding some amount of coordination overhead to that system. The USL captures this effect in a coherency factor. Leaving out the contention factor α, the USL would become this.

$$X(N) = \frac{\lambda N}{1 + \beta N (N - 1)}$$

And illustrated visually, it looks like this.

Combining both the contention and coherency effects, we’ve reconstructed the entire USL. In real world systems, throughput doesn’t scale linearly, because a portion of the work will have to wait for shared resources, and because we add coordination work to the system. A plot of the entire USL has the same general shape as the coherency graph.

“Maybe it works for computers, but does it apply to humans?”

Are contention and coherency specific to computer systems, or do they manifest in knowledge work companies too? In other words, is this Scaling Law truly universal? At first sight, the obvious answer is that human production systems are no different in terms of scaling problems. Knowledge work requires access to shared resources and coordination overhead as much as computer systems do. But knowledge workers are different from CPUs: they typically work on a variety of tasks and responsibilities, and they can often do something else while they wait for access to a shared resource. That is true, and to some degree this recovers productive capacity for the organization. We can organize work such that we make better use of our human “working units”. But it’s not a free lunch, again because of coherency. Let’s unpack in a little more detail how that effect comes back to haunt us in a different way.

We said earlier that the USL describes cases of scaling size (keep workload constant, add productive resources) and cases of scaling load (keep productive resources constant, add more work items to the system). Imagine a system with 1 CPU, which you feed increasingly more work to do. Computers don’t do work sequentially, they multi-task. The CPU will do some work on item 1 for a certain period of time, then park that task and do a bit of work on item 2, and so on. Multi-tasking is why we can run multiple programs on the same computer at the same time, even with only 1 CPU. But all this switching between tasks and keeping track of their status requires coordination… which we already know as coherency. In this regard, we can definitely draw an analogy with an individual knowledge worker. Overloading a knowledge worker with too many work items to handle in parallel, forcing that worker to multi-task, is an enormous productivity killer. For knowledge work systems too, we can’t escape scaling effects. Trying to recover productivity lost to contention only exposes us to more coherency.

If you don’t like contention, you’re gonna hate coherency.

The USL formula illustrates that coherency is the bigger risk of the two, with the productivity loss increasing in proportion to size or load squared. Have another look at our coherency graph: you’ll notice there’s a part of the curve where throughput decreases as you increase productive capacity. It is literally possible to make a system perform worse in absolute terms by adding productive resources. The production benefit gets overwhelmed by the additional coordination complexity. Software engineers will recognize this as a form of Brooks’s Law: “Adding manpower to a late software project makes it later”, coined by Fred Brooks in his 1975 book The Mythical Man-Month. This is more than an amusing quote: the USL shows this can literally be true, and this phenomenon has been observed in multi-CPU computer systems.
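The point where adding resources starts to hurt can be located numerically. The sketch below assumes the common form of the USL, X(N) = λN / (1 + α(N − 1) + βN(N − 1)), with β as the name for the coherency factor. Setting the derivative of that expression to zero gives a peak at N* = √((1 − α) / β). The parameter values are hypothetical and chosen only for illustration.

```python
import math


def usl_throughput(n: float, lam: float = 1.0,
                   alpha: float = 0.0, beta: float = 0.0) -> float:
    """Assumed USL form: X(N) = lam*N / (1 + alpha*(N-1) + beta*N*(N-1))."""
    return lam * n / (1.0 + alpha * (n - 1) + beta * n * (n - 1))


def peak_size(alpha: float, beta: float) -> float:
    """System size at which throughput peaks: sqrt((1 - alpha) / beta).

    With beta == 0 there is no coherency penalty and therefore no peak.
    """
    if beta <= 0:
        return math.inf
    return math.sqrt((1.0 - alpha) / beta)


# Hypothetical parameters, not measured from any real system.
ALPHA, BETA = 0.02, 0.0005

print(f"throughput peaks near N = {peak_size(ALPHA, BETA):.1f}")
for n in (10, 44, 100, 200):
    x = usl_throughput(n, alpha=ALPHA, beta=BETA)
    print(f"N={n:<4} X={x:6.2f}")
```

Beyond the peak, each added unit lowers total throughput, which is the retrograde part of the curve described above. Note how small β is compared with α in this example: because its effect grows with N squared, even a tiny coherency factor ends up dominating at scale.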

In real world knowledge work companies, the multi-tasking related coherency problems can be exacerbated by management. As productivity is perceived to suffer or work falls behind schedule, the managerial reflex is to organize review meetings, ask for status reports, etc. The honorable intent is to analyze what’s going wrong, the assumption usually being that the core problem is caused by skill or organizational deficiencies. But all that tracking and reporting of performance data, and spending time in review meetings, takes even more time away from productive capacity. It is management-mandated coherency. Not to throw stones, but this is where Finance people can be incredibly dangerous to the productivity of their organization. I’ve never met anyone in Finance who understands these system effects, and they are typically on the hunt for “idle capacity” to eliminate in order to reduce cost. Don Reinertsen’s excellent The Principles of Product Development Flow explains how productivity typically starts to deteriorate sharply above 70% capacity utilization. Go ask a random Finance person what they think is reasonable capacity utilization: I’d be surprised if they mention a number below 90%. Managers and Finance folks are dangerous to productivity. Practitioners of lean and agile methodologies will know what the correct remedy is to protect against vicious coherency problems: monitor and limit “Work In Progress”.
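Why high capacity utilization is so punishing can be illustrated with a textbook single-server queue (M/M/1). That model is an assumption made here for illustration, since the essay does not specify one. In it, the average time a work item waits in the queue equals ρ / (1 − ρ) times the average time needed to process one item, where ρ is the utilization.

```python
def queue_wait_multiplier(utilization: float) -> float:
    """Average queueing delay in units of the average service time.

    Assumes an M/M/1 queue (random arrivals, one server), where the
    waiting time in queue is rho / (1 - rho) service times.
    """
    if not 0.0 <= utilization < 1.0:
        raise ValueError("utilization must be in [0, 1)")
    return utilization / (1.0 - utilization)


for rho in (0.5, 0.7, 0.8, 0.9, 0.95):
    wait = queue_wait_multiplier(rho)
    print(f"utilization {rho:.0%}: work waits about {wait:.1f}x its processing time")
```

Under these assumptions, the delay grows slowly at moderate utilization and then explodes: the step from 90% to 95% adds more waiting than the whole climb from 0% to 90%. The exact numbers depend on the queueing model, but the shape of the curve is the reason chasing “idle capacity” tends to backfire.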

In short, you really can’t run a large company like a start-up. The USL pointed us to the two fundamental reasons.

Growing up is hard to do – but who wants to be a kid their entire life?

With all this talk about contention and coherency, you’d almost think it’s bad to grow a business. That is of course not the case: scaling knowledge work companies yields benefits too. These economies of scale are well known: fixed cost and specialized cognition (such as centralizing shared services) amortized over a large business base, market and brand power, and capital efficiency and risk pooling. Fundamentally the source of these benefits is always the same: create a shared resource or asset that can be put to productive use over many work units. The trick is to make sure access to these shared assets doesn’t collide. That is not difficult for brand power and risk pooling, but requires more deliberate organizational effort for fixed cost investments and specialized cognition.

Managing contention is easier than managing coherency because (in theory, at least) contention problems are easier to see. Essentially this is Eliyahu Goldratt’s classic bottleneck. It manifests as work items piling up in front of a shared resource, waiting to be processed (e.g. the Legal department has a big backlog of customer contracts to handle). To actually address your contention problem, there’s basically only one thing to do, and that is to create additional capacity at the bottleneck. Coherency problems are much trickier for two reasons. First, they are harder to detect: productivity losses due to context switching and multi-tasking of individual knowledge workers are invisible, and excessive overhead manifests as communication, where it is hard to separate “good” communication from “bad” communication. (We’ll come back to that in the final section.) Second, once you’ve detected a coherency problem, it is harder to fix. The solution may be to add resources, such that individual knowledge workers have fewer items to handle, reducing the pressure for them to multi-task. But adding resources increases information needs and coordination overhead.

The Universal Scalability Law is no practical silver bullet, but it is a useful mental model. Its concepts and insights ground management literature in areas such as process design, team and org structure, and practices in product development. Here’s two examples. The first is from Beyond Reengineering, a book in which Michael Hammer reflects on the ramifications of the Reengineering process design methods he advocated.

“The consequences of redesigning processes to reduce non-value-adding work are many and significant. The first of these is that jobs become bigger and more complex. One way to appreciate this fact is through the use of an eggshell metaphor. The Industrial Age broke processes into series of small tasks. Think of these as the myriad fragments of an eggshell. To reassemble these fragments into an entire shell requires an enormous amount of glue—and when completed, the reassembled structure will be fragile, unstable, and ugly. Each ragged seam is a potential trouble spot. Moreover, since glue is more expensive than eggshell, the reconstructed shell will be quite expensive. Similarly, when work is broken into small and simple tasks, one needs complex processes full of non-value-adding glue—reviews, managerial audits, checks, approvals, etc.—to put them back together. Those myriad interactions lead to departmental as well as personal miscommunications, misunderstandings, squabbles, reconciliations, telephone calls—headaches too numerous to list. Furthermore, they give rise to handoffs, interstices, and dark corners where errors lurk and overhead costs breed.

The only way to avoid using so much glue is to start with bigger fragments—in other words, bigger jobs[…]”

The eggshell metaphor sits a little awkwardly with me, but we recognize this passage as the coherency problem. By chopping work up into ever smaller pieces, you vastly increase the required coordination effort. Hammer’s recommended solution is not to overindex on the historical manufacturing template of work specialization. By “bigger jobs”, he means keeping more and diverse tasks with the same individual, which internalizes a lot of coordination and context sharing overhead.

The following paragraphs are from Team Topologies, a book about how to design software organizations.

“requiring everyone to communicate with everyone else is a recipe for a mess” (Chapter 2)

“If the organization has an expectation that “everyone should see every message in the chat” or “everyone needs to attend the massive standup meetings” or “everyone needs to be present in meetings” to approve decisions, then we have an organization design problem. Conway’s law suggests that this kind of many-to-many communication will tend to produce monolithic, tangled, highly coupled, interdependent systems that do not support fast flow. More communication is not necessarily a good thing.” (Chapter x)

“As Fred Brooks points out in his classic book The Mythical Man-Month, adding new people to a team doesn’t immediately increase its capacity (this became known as Brooks’s law). In fact, it quite possibly reduces capacity during an initial stage. There’s a ramp-up period necessary to bring people up to speed, but the communication lines inside the team also increase significantly with every new member. Not only that, but there is an emotional adaptation required both from new and old team members in order to understand and accommodate each other’s points of view and work habits (the “storming” stage of Tuckman’s team-development model)”

“This approach is backed by recent research into high-performing organizations presented in Accelerate:

In teams which score highly on architectural capabilities [which directly lead to higher performance], little communication is required between delivery teams to get their work done, and the architecture of the system is designed to enable teams to test, deploy, and change their systems without dependencies on other teams. In other words, architecture and teams are loosely coupled.”

This too is coherency described in different terms. “More communication is not necessarily a good thing” is a surprising statement in its own right, but the key is indeed to separate value-added information flows from overhead noise. That is easier said than done, and translating these concepts into practical noise cancellation tools is a good source for many more writing projects on this site.
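The remark in the Brooks’s law passage that communication lines “increase significantly with every new member” can be made concrete. A minimal sketch, assuming every team member may need to talk to every other member, so that a team of n people has n(n − 1) / 2 possible pairwise lines:

```python
def communication_lines(team_size: int) -> int:
    """Number of distinct pairs in a team: n * (n - 1) / 2.

    Assumes everyone can communicate with everyone else.
    """
    if team_size < 0:
        raise ValueError("team_size must not be negative")
    return team_size * (team_size - 1) // 2


for size in (3, 5, 10, 20, 50):
    print(f"team of {size:>2}: {communication_lines(size):>4} possible lines")
```

Doubling a team from 10 to 20 people roughly quadruples the number of possible lines (45 to 190). The same N(N − 1) growth appears in the coherency term of the USL in its usual form, which is why restricting who needs to talk to whom pays off so quickly.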

Bring the Universal Scalability Law into your daily practice – Managerial questions to ask yourself

Here’s some questions to start working on the (surprisingly few) fundamental causes of scaling problems in your organization.

> Is your challenge really organizational, or caused by individual capability? If you have poorly skilled people, train them. If they are underperforming or unmotivated, motivate or replace them.

> Are your workflows sluggish because of shared resources? What are your key centralized or shared teams? If they are constrained and people complain about them, do you side with the complainers trying to “fix” the central team, or do you investigate the counterintuitive (but possibly more effective) step of reorganizing work to relieve the central team?

> Is senior management a major “shared resource” source of contention, e.g. inserted as approval or decision gates in operational flows?

> Which of the regularly recurring instances of communication you observe are value-adding? Which ones are indicative of overhead management patterns or symptomatic of different pockets of the company trying to stay in sync? What do these sources of noise teach you about the underlying coherency in your production?

> Can you cancel this noise by organizing information flows differently? Can you replace real-time (but hard to schedule) multi-participant meetings with asynchronous written information sharing formats? Can you reduce 1:1 communication by creating pull-type knowledge bases?

Best of success quieting the noise in your organization.

Noise cancellation

Credits

Words

> Stefan Verstraeten

Ideas

> Baron Schwartz wrote a truly fantastic paper on Scalability in 2015. It is hosted here. It’s ten years old, and I saved a personal copy of the PDF in case it ever gets taken down. He also gave a lecture about it in 2018, but the slides are hard to follow and interpret if you haven’t read about USL first. The last slide contains links to other material such as this if you want to geek out further.

> Michael Hammer, Beyond Reengineering: How the Process-Centered Organization is Changing Our Work and Our Lives (1997), published by Harper Business (ISBN 978-0887308802). A slightly outdated but nevertheless thoughtful reflection on contemporary knowledge work. Deserves more than the 3.6 stars it had on Goodreads.

> Matthew Skelton and Manuel Pais, Team Topologies: Organizing Business and Technology for Fast Flow of Value, published by IT Revolution. First edition 2019 (ISBN 978-1942788812), second edition 2025. The book proposes an approach to building Software Engineering teams and organizations, and it is not much of a stretch to apply it to other types of complex knowledge work production. It’s predominantly empirical, so it’s interesting to read it with the USL concepts at the back of your head as a mental model.

> Software Engineering organizations can be surprisingly good at org design, because the principles driving good software architecture translate well to carbon-based systems. A big idea in this regard is the pair of “loose coupling / tight cohesion” concepts.

Photo

> Header – Marc Arnela, Barcelona https://marcarnela.wordpress.com/acoustics-lab/

> Mercedes Juan Musotto conducting the Santa Monica College Symphony Orchestra, via https://www.thecorsaironline.com/

> In Blow Out (1981), John Travolta plays an audio engineer who accidentally picks up noise that gets him entangled in a complicated plot.

Video

> Baron Schwartz presenting his work on the Universal Scalability Law at DataEngConf NYC 2018. (This is about computer systems, not knowledge workers.)